What Raising for ngram Taught Me About How Investors Think Now

Issue 55: Fundraising changed fast. Here’s a founder who lived through that shift.

Anish didn’t go into fundraising for ngram expecting it to be a lesson in how fast the market had changed. He went in expecting what most technical founders expect: hard questions about the technology, confidence questions about the team, maybe some pushback on market size.

What he got was different. Not harder, exactly. Just different in a way that took a few meetings to fully understand. Investors weren’t skeptical about whether ngram could build the product. They were asking questions he hadn’t prepared for: about GTM, about cost structure, about what would still be defensible in two years when the model layer had moved again.

The fundraising environment had shifted underneath the founders who built in 2023. This is the story of one founder who raised through that shift and what he learned about how investors actually think now.

From model magic to business mechanics

Anish and his co-founder Devadutta started building ngram at a moment when AI demos still moved rooms on their own. ngram is an AI video creation platform that takes what companies already have (a website URL, a doc, a screen recording, a prompt) and turns it into polished, on-brand video for product launches, marketing campaigns, and demos.

By the time they were deep in investor conversations, something had shifted underneath them. The demo still mattered (it could open a meeting and set a tone), but it had stopped being the thing that closed conviction. What investors were now working toward, methodically, across every serious meeting, was a different question: does the business behind the product actually work?

The question had shifted from “is this impressive?” to “can I underwrite this company?” Most founders building in 2023 didn’t notice until they were already in the room.

What investors actually underwrite now

The most clarifying moment in Anish’s fundraising process came from a fund he expected to close. A Tier-1 VC had moved fast after their first conversation with ngram. Follow-ups came quickly. The energy felt right. Coming out of those early calls, Anish thought they were close: not just to a yes, but to the right yes, from people who understood what they were building.

Then the second meeting went differently.

ngram stayed on their path. The conversation with that fund didn’t go further. But the experience became one of the most useful data points from the entire raise, because it made the difference between enthusiasm and actual underwriting viscerally clear.

What serious investors are underwriting in 2026 follows a consistent pattern. Think of it as four layers, each one harder to fake than the last.

Layer 1 - Workflow Necessity: Is the product solving something that already has a budget line, or asking customers to create a new one? Investors aren’t just asking whether the problem is real; they’re asking whether removing the product tomorrow would break something. Tools that live at the edge of a workflow get cancelled when priorities shift. Tools that sit inside a workflow become renewal conversations.

Layer 2 - Product Proof: Is usage happening without the founder pushing it? Investors can tell the difference between a product people use because it was recommended and a product people return to because it has become part of how they work.

Layer 3 - Economic Proof: Does the cost-to-serve behave predictably as usage scales? Every query in an AI product has a real cost. Unlike classic SaaS, where margins flatten, AI margins can deteriorate fast when power users or usage spikes hit. Investors are modeling this now, and they expect founders to be modeling it too.

Layer 4 - Defensibility Proof: What compounds with usage? The model isn’t the moat; it changes too fast. What investors are looking for is something that builds internally as the product grows: proprietary data, workflow embedding, distribution advantage, product depth that a well-funded competitor can’t replicate in a year.

How AI risk is priced and why it’s different from SaaS

There’s a phrase you hear less often than you should in early-stage AI conversations: COGS is back.

Classic SaaS companies enjoyed structurally high, stable gross margins. Infrastructure costs flattened quickly.
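
To make the margin point concrete, here is a rough back-of-the-envelope sketch of how a flat subscription price behaves when a small group of power users drives most of the queries. Every number and name in it (the price, the per-query cost, the `Segment` type, the usage figures) is a made-up assumption for illustration. None of it comes from ngram or from any investor’s model.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """A hypothetical group of customers with similar usage."""
    name: str
    customers: int
    queries_per_month: int  # per customer


def gross_margin(segments, price_cents, cost_per_query_cents):
    """Gross margin for a flat monthly price with per-query inference cost.

    Amounts are integer cents so the arithmetic stays exact.
    """
    revenue = sum(s.customers for s in segments) * price_cents
    if revenue == 0:
        raise ValueError("revenue must be positive to compute a margin")
    cogs = sum(s.customers * s.queries_per_month for s in segments) * cost_per_query_cents
    return (revenue - cogs) / revenue


# Assumed inputs: $50.00 per customer per month, $0.05 per query.
PRICE_CENTS = 5000
COST_PER_QUERY_CENTS = 5

# 100 customers who all behave like the "typical" user.
uniform_base = [Segment("typical", 100, 200)]

# Same 100 customers, but 10 of them are power users.
skewed_base = [
    Segment("typical", 90, 200),
    Segment("power", 10, 5000),
]

uniform_margin = gross_margin(uniform_base, PRICE_CENTS, COST_PER_QUERY_CENTS)
skewed_margin = gross_margin(skewed_base, PRICE_CENTS, COST_PER_QUERY_CENTS)

print(f"uniform usage: {uniform_margin:.0%}")  # 80%
print(f"with power users: {skewed_margin:.0%}")  # 32%
```

Under these assumed numbers, revenue is identical in both cases, but ten heavy users out of a hundred take the margin from 80% to 32%. That sensitivity, rather than any single margin figure, is the kind of thing a cost-to-serve model is meant to surface.
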
Investors spent a decade evaluating software businesses where the cost side of the equation was almost predictable, which is how CAC/LTV ratios and net revenue retention benchmarks became the standard vocabulary. Those frameworks took SaaS ten years to develop. In AI, they arrived in roughly 24 months. By late 2024, investors were already asking seed-stage AI founders about inference cost sensitivity and compliance readiness.

For ngram, this showed up in a specific way. Both founders come from engineering backgrounds, so investors quickly satisfied themselves on the technology question. What took longer, and what showed up as the deeper diligence, was product quality as a cost signal. Investors who brought in technical partners and researchers to validate ngram’s output weren’t just doing product diligence. They were also checking whether the quality promise required expensive compute to deliver, because if it did, that had direct implications for the margin trajectory.

The risks investors are actually pricing into every AI deal right now:

What investors are reading across all of these isn’t whether the risks exist; every AI company carries some version of them. They’re reading whether the founder has thought them through. The ones who have thought them through build differently. The ones who haven’t tend to show it mid-diligence in ways that are hard to recover from.

Why demos are not enough anymore

A strong demo in 2026 gets you the meeting. That’s genuinely still worth something: the first conversation, the attention, a reason to follow up. What it stopped doing is carrying conviction on its own.

Investors have sat through enough impressive AI demonstrations to have developed a specific kind of pattern recognition. They can see the difference between a product that performs well in a controlled environment and a product that holds up inside a real workflow, used by someone whose job depends on it working.

For Anish, the GTM diligence was where this showed up most directly. The technology questions got answered quickly. What took more conversation was something different.

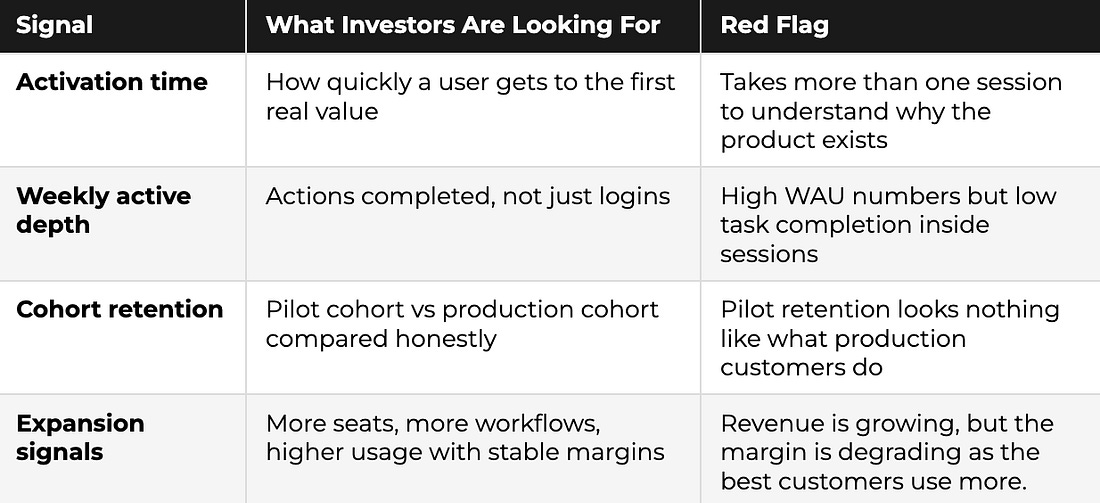

These are not questions about the AI. They’re questions about whether the business has a path that doesn’t depend on the founder being in every room.

A strong demo proves the product can do the thing. It doesn’t prove that users come back next week without prompting. It doesn’t prove that the workflow shown in the demo is the workflow customers use in production.

The things that actually move the room now are less visual and harder to fake. How fast does a new user get to the moment where the product clicks? Does it behave the same way under real conditions as it did in the demo? Who is coming back the following week without any prompting? And is there a repeatable path to more users, or was the initial cohort the result of a one-time push?

What traction actually means now

Traction is one of the most misread signals in early-stage AI fundraising, and Anish saw this play out differently depending on which investor was across the table.

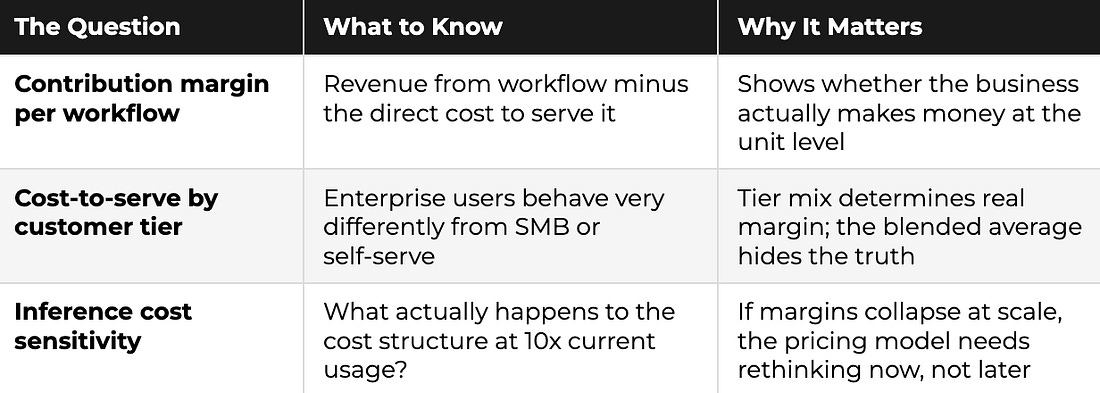

That is true. And it is also the thing that gives founders false confidence about where they actually stand.

The ones who moved through early-stage conversations fastest, even without strong traction numbers, weren’t the ones with the best metrics. They were the ones who had replaced traction data with traction clarity. Not just “here’s our growth” but “here’s exactly who uses this, how they found us, what they did in the first session, and why they came back.”

The hardest line investors draw is between pilot and production. Pilot budget is not workflow budget. Early revenue from pilots is a hypothesis. Production adoption is evidence.

The traction scorecard below is what investors are actually running in diligence, and the column that trips most founders up is the last one.

The unit economics page investors now expect

At the seed stage, investors don’t expect perfect margins. What they do expect is that founders have thought through their cost structure honestly, because the ones who haven’t are the ones who hit a wall when the business scales.

For Anish, this was one of the areas where the fundraising process itself was clarifying. The questions investors kept returning to (about output quality, about cost per workflow, about what the margin picture looked like as ngram grew) weren’t hostile. They were a roadmap for what the business needed to understand about itself.

Three numbers come up in almost every serious AI diligence conversation right now:

The investor test is simple: if the math doesn’t work at 10 customers, it won’t fix itself at 1,000; it will just become a bigger problem. Founders who can walk through these numbers honestly, even when they aren’t great, earn more trust in diligence than founders who haven’t modeled them at all.

Defensibility in early-stage AI: what actually counts

The question Anish heard in almost every serious investor meeting came in different forms, but it was always asking the same thing: what gets better as more people use ngram?

The model is not the answer to that question: model performance improves on someone else’s roadmap and is available to any competitor with an API key. What investors are actually looking for is the asset that builds independently: proprietary data, workflow embedding, distribution advantage, product depth a well-funded competitor can’t replicate quickly.

For ngram specifically, the answer lives in what accumulates as more teams use the platform. The brand assets, the tone, the approved visual language, the library of past videos: all of it builds into an increasingly specific picture of how a company tells its story. A competitor starting fresh with the same team would have none of that context. The output gets better the more ngram understands the company behind it. That is what compounding looks like in practice: not a feature, but an asset that deepens with use.

The fundraising narrative that actually works and a mistake worth learning from

One of the clearest lessons from Anish’s process came from an unexpected direction. He’d gone into the raise thinking geographic diversity in the investor base was a strategic asset: bringing in investors from across Asia and Europe who could provide local support as ngram expanded into new markets.

The broader lesson: at seed, focus beats optionality. Every time.

The same principle applies to the fundraising narrative itself. The pitches that work in 2026 don’t try to cover everything. They follow a tight, honest arc: Here is the specific workflow we’re improving. Here is the measurable lift we create. Here is why we can deliver that repeatedly. Here is why the margin path improves as we scale.

One-sentence version: “We convert X hours of work into Y outcomes at Z reliability, with a margin path that improves as we scale.”

Talking to Anish about his process, I kept coming back to this: the founders who moved through diligence fastest weren’t the ones who had the best answers in the room. They were the ones who had already asked themselves the uncomfortable questions before they walked in.

So rather than a framework, here’s how I’d actually think about preparation: seven things worth sitting with honestly before you start taking meetings.

Closing

Anish came out of the ngram fundraising process with something most founders don’t talk about: a clearer picture of his own business than he had going in. The hard questions, the investor who wanted a different company, the GTM diligence that surprised him more than the technical diligence: all of it added up to a stress test that sharpened the thinking in ways that a quieter raise wouldn’t have.

The market he raised in wasn’t the same one he’d started building for. The questions had moved. The bar had shifted from product capability to business durability. And the founders who moved through that environment fastest weren’t the ones with the cleanest numbers; they were the ones who had already asked themselves the hard questions before an investor did.

That’s the real takeaway from Anish’s process. Not a framework. Not a checklist. The founders who get funded in this environment aren’t always the ones who were ready before they started. They’re the ones who got honest during it: about their costs, their defensibility, their GTM, and where the gaps actually were.

The wave hasn’t stopped. It’s just gotten more honest about what it carries.

See you in the next issue,
– Shubham Bopche, Venture Unlocked


Thursday, 12 March 2026
